(This post was initially published as an article on my LinkedIn Newsletter. Please see that version for comments and discussion.)

GDP isn't a particularly good measure of the true health of a country's economy. Most economists and politicians know this.

This isn't a plea for non-financial measures such as "national happiness". It's a numerical issue.

GDP is hard to measure, with definitions that vary widely by country.

Important aspects of the modern world such as "free" online services and family-provided eldercare aren't really counted properly.

However, people won't abandon GDP, because they like comparable data with a long history. They can plot trends, curves, averages... and don't need to revise spreadsheets and models from the ground up with something new.

Other metrics are linked to GDP - R&D intensity, NATO military spending commitments and so on - which would need to be re-based if a different measure were used. The accounting and political headaches would be huge.

A poor metric often has huge inertia and high switching costs.

Telecoms is no different, like many sub-sectors of the economy. There are many old-fashioned metrics that are really not fit for purpose any more - and even some new ones that are badly-conceived. They often lead to poor regulatory decisions, poor optimisation and investment approaches by service providers, flawed incentives and large tranches of self-congratulatory overhype.

Some of the worst telecoms metrics I see regularly include:

- Voice traffic measured in minutes of use (or messages counted individually)

- Cost per bit (or increasingly energy use per bit) for broadband

- $ per MHz per POP (population) for radio spectrum auctions

- ARPU

- CO2 savings "enabled" by telecom services, especially 5G

That's not an exhaustive list by any means. But the point of this article is to make people think twice about commonplace numbers - and ideally think of meaningful metrics rather than easy or convenient ones.

The sections below give some quick thoughts on why these metrics either won't work in the future - or are simply terrible even now and in the past.

(As an aside, if you ever see numbers - especially forecasts - with too many digits and "spurious accuracy", that's an immediate red flag: "The Market for Widgets will be $27.123bn in 2027". It tells you that the source really doesn't understand numbers - and you really shouldn't trust, or base decisions on, someone that mathematically inept.)

Minutes and messages

The reason we count phone calls in minutes (rather than, say, conversations or just a monthly access fee) is based on an historical accident. Original human switchboard operators were paid by the hour, so a time-based quantum made the most sense for billing users. And while many phone plans are now either flat-rate, or use per-second rates, many regulations are still framed in the language of "the minute". (Note: some long-distance calls were also based on length of cable used, so "per mile" as well as minute)

This is a ridiculous anachronism. We don't measure or price other audiovisual services this way. You don't pay per-minute for movies or TV, or value podcasts, music or audiobooks on a per-minute basis. Other non-telephony voice communications modes such as push-to-talk, social audio like ClubHouse, or requests to Alexa or Siri aren't time-based.

Ironically, shorter calls are often more valuable to people. There's a fundamental disconnect between price and value.

A one-size-fits-all metric for calls stops telcos and other providers from innovating around context, purpose and new models for voice services. It's hard to charge extra for "enhanced voice" in a dozen different dimensions. They should call on governments to scrap minute-based laws and reporting requirements, and rejig their own internal systems to a model that makes more sense.

Much the same argument applies to counting individual messages/SMS as well. It's a meaningless quantum that doesn't align with how people use IMs / DMs / group chats and other similar modalities. It's like counting or charging for documents by the pixel. Threads, sessions or conversations are often more natural units, albeit harder to measure.

Cost per bit

"5G costs less per bit than 4G". "Traffic levels increase faster than revenues!".

Cost-per-bit is an often-used but largely meaningless metric, which drives poor decision-making and incentives, especially in the 5G era of multiple use-cases - and essentially infinite ways to calculate the numbers.

Different bits have very different associated costs. A broad average is very unhelpful for investment decisions. The cost of a “mobile” bit (for an outdoor user in motion, handing off from cell to cell) is very different to an FWA bit delivered to a house’s external fixed antenna, or a wholesale bit used by an MVNO.

Costs can vary massively by spectrum band, to a far greater degree than technology generation - with the cost of the spectrum itself a major component. Convergence and virtualisation mean that the same costs (eg core and transport networks) can apply to both fixed and mobile broadband, and 4G/5G/other wireless technologies. Uplink and downlink bits also have different costs - which perhaps should include the cost of the phone and the power it uses, not just the network.

The arrival of network slicing (and URLLC) will mean “cost per bit” is an ever-worse metric, as different slices will inherently be more or less "expensive" to create and operate. Same thing with local break-out, delivery of content from a nearby edge-server or numerous other wrinkles.

But in many ways, the "cost" part of cost/bit is perhaps the easiest to analyse, despite the accounting variabilities. Given enough bean-counters and some smarts in the network core/OSS, it would be possible to create some decent numbers, at least theoretically.

But the bigger problem is the volume of bits. This is not an independent variable that flexes up and down based only on user demand and consumption. Faster networks with more instantaneous "headroom" actually create many more bits, as adaptive codecs and other application intelligence mean that traffic expands to fill the space available. And pricing strategy can basically dial up or down the number of bits customers use, with minimal impact on costs.

A video application might automatically increase the frame rate, or upgrade from SD to HD, with no user intervention - and very little extra "value". There might be 10x more bits transferred for the same costs (especially if delivered from a local CDN). Application developers might use tools to predict available bandwidth, and change the behaviour of their apps dynamically.

So - if averaged costs are incalculable, and bit-volume is hugely elastic, then cost/bit is meaningless. Ironically, "cost per minute of use" might actually be more relevant here than it is for voice calls. At the very least, cost per bit needs separate calculations for MBB / FWA / URLLC, and by local/national network scale.

(By a similar argument, "energy consumed per bit" is pretty useless too).
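To make the elasticity point concrete, here is a minimal sketch in Python. All the figures are hypothetical and chosen only for illustration; the function name `cost_per_gb` is my own, and cost per gigabyte stands in for cost per bit. It simply shows that if traffic rises 10x while costs stay flat, the metric "improves" 10x without anything meaningful having changed.

```python
def cost_per_gb(total_cost: float, traffic_gb: float) -> float:
    """Average cost per gigabyte, used here as a stand-in for cost per bit."""
    return total_cost / traffic_gb


# Hypothetical figures, chosen only to illustrate the argument.
network_cost = 1_000_000.0          # assumed annual network cost (any currency)
traffic_sd_gb = 2_000_000.0         # assumed traffic while video streams in SD
traffic_hd_gb = traffic_sd_gb * 10  # apps upgrade to HD: 10x the bits, same cost

before = cost_per_gb(network_cost, traffic_sd_gb)
after = cost_per_gb(network_cost, traffic_hd_gb)

print(f"Cost per GB before: {before:.3f}")
print(f"Cost per GB after:  {after:.3f}")
print(f"Apparent improvement: {before / after:.0f}x, with no change in cost")
```

The headline number falls by a factor of ten purely because the denominator was inflated by application behaviour, not because the network got any cheaper to run.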

Spectrum prices for mobile use

The mobile industry has evolved around several generations of technology, typically provided by MNOs to consumers. Spectrum has typically been auctioned for exclusive use on a national / regional basis, in fixed-sized slices in chunks perhaps 5/10/20MHz wide, with licenses often specifying rules on coverage of population.

For this reason, it's not surprising that a very common metric is "$ per MHz / Pop" - the cost per megahertz, per addressable population in a given area.

Up to a point, this has been pretty reasonable, given that the main use of 2G, 3G and even 4G has been for broad, wide-area coverage for consumers' phones and sometimes homes. It has been useful for investors, telcos, regulators and others to compare the outcomes of auctions.

But for 5G and beyond (actually the 5G era, rather than 5G specifically), this metric is becoming ever less useful. There are three problems here:

- Growing focus on smaller areas of licenses: county-sized in CBRS in the US, and site-specific in Germany, UK and Japan for instance, especially for enterprise sites and property developments. This makes comparisons much harder, especially if areas are unclear.

- Focus of 5G and private 4G on non-consumer applications and uses. Unless the idea of "population" is expanded to include robots, cars, cows and IoT gadgets, the "pop" part of the metric clearly doesn't work. As the resident population of a port or offshore windfarm zone is zero, then a local spectrum license would effectively have an infinite $ / MHz / Pop.

- Spectrum licenses are increasingly being awarded with extra conditions such as coverage of roads, land-area - or mandates to offer leases or MVNO access. Again, these are not population-driven considerations.

Over the next decade we will see much greater use of mobile spectrum-sharing, new models of pooled ("club") spectrum access, dynamic and database-driven access, indoor-only licenses, secondary-use licenses and leases, and much more.

Taken together, these issues are increasingly rendering $/MHz/Pop a legacy irrelevance in many cases.
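The zero-population problem is easy to show with a quick calculation. The sketch below is illustrative only: the function `price_per_mhz_pop` and both licences are hypothetical, with made-up prices, bandwidths and populations.

```python
import math


def price_per_mhz_pop(price: float, bandwidth_mhz: float, population: int) -> float:
    """Auction price divided by (MHz of spectrum x population covered)."""
    if population == 0:
        # No resident population: the ratio is undefined, shown here as infinite.
        return math.inf
    return price / (bandwidth_mhz * population)


# Hypothetical licences, for illustration only.
national = price_per_mhz_pop(price=1_000_000_000, bandwidth_mhz=100, population=50_000_000)
port_site = price_per_mhz_pop(price=50_000, bandwidth_mhz=100, population=0)

print(f"National licence: ${national:.2f} per MHz per pop")
print(f"Port licence:     ${port_site} per MHz per pop")
```

A cheap local licence for a port comes out as "infinitely expensive" on this measure, while the national licence looks like a bargain. The metric says nothing useful about either.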

ARPU

"Average Revenue Per User" is a longstanding metric used in various parts of telecoms, but especially by MNOs for measuring their success in selling consumers higher-end packages and subscriptions. It has long come under scrutiny for its failings, and various alternatives such as AMPU (M for margin) have emerged, as well as ways to carve out dilutive "user" groups such as low-cost M2M connections. There have also been attempts to distinguish "user" from "SIM" as some people have multiple SIMs, while other SIMs are shared.

At various points in the past it used to "hide" effective loan repayments for subsidised handsets provided "free" in the contract, although that has become less of an issue with newer accounting rules. It also faces complexity in dealing with allocating revenues in converged fixed/mobile plans, family plans, MVNO wholesale contracts and so on.

A similar issue to "cost per bit" is likely to happen to ARPU in the 5G era. Unless revenues and user numbers are broken out more finely, the overall figure is going to be a meaningless amalgam of ordinary post/prepaid smartphone contracts, fixed wireless access, premium "slice" customers and a wide variety of new wholesale deals.

The other issue is that ARPU further locks telcos into the mentality of the "monthly subscription" model. While fixed monthly subs, or "pay as you go top-up" models still dominate in wireless, others are important too, especially in the IoT world. Some devices are sold with connectivity included upfront.

Enterprises buying private cellular networks specifically want to avoid per-month or per-GB "plans" - it's one of the reasons they are looking to create their own dedicated infrastructure. MNOs may need to think in terms of annual fees, systems integration and outsourcing deals, "devices under management" and all sorts of other business models. The same is true if they want to sell "slices" or other blended capabilities - perhaps geared to SLAs or business outcomes.

Lastly - what is a "user" in future? An individual human with a subscription? A family? A home? A group? A device?

ARPU is another metric overdue for obsolescence.
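As a rough illustration of the "meaningless amalgam" problem, the sketch below blends three hypothetical customer segments into one ARPU figure. The segment names, revenues and user counts are all invented for the example.

```python
# Hypothetical segments: (monthly revenue per user, number of users).
segments = {
    "smartphone": (30.0, 1_000_000),
    "fixed wireless access": (50.0, 100_000),
    "IoT connections": (1.0, 2_000_000),
}

total_revenue = sum(arpu * users for arpu, users in segments.values())
total_users = sum(users for _, users in segments.values())
blended_arpu = total_revenue / total_users

for name, (arpu, users) in segments.items():
    print(f"{name:>22}: ARPU {arpu:6.2f} across {users:>9,} users")
print(f"{'blended':>22}: ARPU {blended_arpu:6.2f} across {total_users:>9,} users")
```

With these assumed numbers the blended figure comes out at roughly 12, which describes none of the three groups. Adding more IoT connections would drag it down further even while total revenue rises.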

CO2 "enablement" savings

I posted last week about the growing trend of companies and organisations to cite claims that a technology (often 5G or perhaps IoT in general) allows users to "save X tons of CO2 emissions".

You know the sort of thing - "Using augmented reality conferencing on your 5G phone for a meeting avoids the need for a flight & saves 2.3 tons of CO2" or whatever. Even leaving aside the thorny issues of the Jevons Paradox, which means that efficiency tends to expand usage rather than replace it, there's a big problem here:

Double-counting.

There's no attempt at allocating this notional CO2 "saving" between the device(s), the network(s), the app, the cloud platform, the OS & 100 other elements. There's no attempt such as "we estimate that 15% of this is attributable to 5G for x, y, z reasons".

Everyone takes 100% credit. And then tries to imply it offsets their own internal CO2 use.

"Yes, 5G needs more energy to run the network. But it's lower CO2 per bit, and for every ton we generate, we enable 2 tons in savings in the wider economy".

Using that logic, the greenest industry on the planet is industrial sand production, as it's the underlying basis of every silicon chip in every technological solution for climate change.

There's some benefit from CO2 enablement calculations, for sure - and there's more work going into reasonable ways to allocate savings (look in the comments for the post I link to above), but readers should be super-aware of the limitations of "tons of CO2" as a metric in this context.
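The double-counting arithmetic can be sketched in a few lines. The 2.3-ton figure is the made-up example from earlier in this section, and the attribution shares below are purely hypothetical placeholders (only the 15% for 5G echoes the example above); a real allocation would need proper justification.

```python
claimed_saving_tons = 2.3  # the illustrative figure quoted earlier in this section

# Hypothetical attribution shares; a real allocation would need justification.
shares = {
    "5G network": 0.15,
    "device": 0.25,
    "app": 0.30,
    "cloud platform": 0.20,
    "operating system": 0.10,
}

# Everyone takes 100% credit: the same saving is counted once per claimant.
double_counted_total = claimed_saving_tons * len(shares)

# Allocated credit: shares sum to 1, so the total equals the original saving.
allocated = {name: claimed_saving_tons * share for name, share in shares.items()}
allocated_total = sum(allocated.values())

print(f"Total if everyone claims 100%: {double_counted_total:.1f} tons")
print(f"Total with allocated shares:   {allocated_total:.1f} tons")
print(f"5G network's allocated share:  {allocated['5G network']:.3f} tons")
```

With just five claimants, one notional saving is reported five times over. With "100 other elements" in the chain, the inflation gets correspondingly worse.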

So what's the answer?

It's fairly easy to poke holes in things. It's harder to find a better solution. Having maintained spreadsheets of company and market performance and trends myself, I know that analysis is often held hostage by what data is readily available. Telcos report minutes-of-use and ARPU, so that's what everyone else uses as a basis. Governments may demand that reporting, or frame rules in those terms (for instance, wholesale voice termination rates have "per minute" caps in some countries).

It's very hard to escape from the inertia of a long and familiar dataset. Nobody wants to recreate their tables and try to work out historic comparables. There is huge path dependence at play - small decisions years ago, which have been entrenched in practices in perpetuity, even though the original rationale has long since gone. (You may have noticed me mention path dependence a few times recently. It's a bit of a focus of mine at the moment....)

But there's a circularity here. Certain metrics get entrenched and nobody ever questions them. They then get rehashed by governments and policymakers as the basis for new regulations or measures of market success. Investors and competition authorities use them. People ignore the footnotes and asterisks warning of limitations.

The first thing people should do is question the definitions of familiar public or private metrics. What do they really mean? For a ratio, are the assumptions (and definitions) for both denominator and numerator still meaningful? Is there some form of allocation process involved? Are there averages which amalgamate lots of dissimilar categories?

I'd certainly recommend Tim Harford's book "How to Make the World Add Up" as a good backgrounder to questioning how stats are generated and sometimes misused.

But the main thing I'd suggest is asking whether metrics can either hide important nuance - or can set up flawed incentives for management.

There's a long history of poor metrics having unintended consequences. For example, it would be awful (but not inconceivable) to raise ARPUs by cancelling the accounts of low-end users. Or perhaps an IoT-focused vertical service provider gets punished by the markets for "overpaying" for spectrum in an area populated by solar panels rather than people.
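The ARPU incentive problem is simple arithmetic. In the sketch below, the two customer tiers and their figures are hypothetical; it just shows that dropping the low-end tier lifts the headline ARPU while total revenue goes down.

```python
# Hypothetical customer tiers: (monthly revenue per user, number of users).
high_end = (40.0, 900_000)
low_end = (5.0, 100_000)

# Before: both tiers are on the books.
revenue_before = high_end[0] * high_end[1] + low_end[0] * low_end[1]
users_before = high_end[1] + low_end[1]
arpu_before = revenue_before / users_before

# After: the low-end accounts are cancelled.
revenue_after = high_end[0] * high_end[1]
users_after = high_end[1]
arpu_after = revenue_after / users_after

print(f"ARPU before: {arpu_before:.2f}, revenue {revenue_before:,.0f}")
print(f"ARPU after:  {arpu_after:.2f}, revenue {revenue_after:,.0f}")
```

A management team rewarded on ARPU alone would look better after the cull, despite serving fewer customers and earning less money.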

Stop and question the numbers. See who uses them / expects them and persuade them to change as well. Point out the fallacies and flawed incentives to policymakers.

If you have any more examples of bad numbers, feel free to add them in the comments. I forecast there will be 27.523 of them, by the end of the year.

The author is an industry analyst and strategy advisor for telecoms companies, governments, investors and enterprises. He often "stress-tests" qualitative and quantitative predictions and views of technology markets. Please get in touch if this type of viewpoint and analysis interests you - and also please follow @disruptivedean on Twitter.

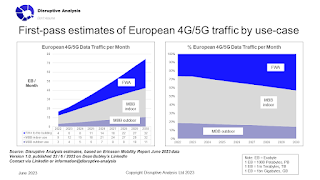

- Collect more granular data, or make reasoned estimates, of breakdowns of data traffic in your country & trends over time. As well as #FWA vs #MBB & indoor vs outdoor, there should be a split between rural / urban / dense & ideally between macro #RAN vs outdoor #smallcell vs dedicated indoor system. Break out rail / road transport usage.

- Develop a specific policy (or at least gather data and policy drivers) for FWA & indoor #wireless. That feeds through to many areas including spectrum, competition, consumer protection, #wholesale, rights-of-way / access, #cybersecurity, inclusion, industrial policy, R&D, testbeds and trials etc. Don't treat #mobile as mostly about outdoor or in-vehicle connectivity.

- View demand forecasts of mobile #datatraffic and implied costs for MNO investment / capacity-upgrade through the lens of detailed stats, not headline aggregates. FWA is "discretionary"; operators know it creates 10-20x more traffic per user. In areas with poor fixed #broadband (typically rural) that's potentially good news - but those areas may have spare mobile capacity rather than needing upgrades. Remember 4G-to-5G upgrade CAPEX is needed irrespective of traffic levels. FWA in urban areas likely competes with fibre and is a commercial choice, so complaints about traffic growth are self-serving.

- Indoor & FWA wireless can be more "tech neutral" & "business model neutral" than outdoor mobile access. #WiFi, #satellite and other technologies play more important roles - and may be lower-energy too. Shared / #neutralhost infrastructure is very relevant.

- Think through the impact of detailed data on #spectrum requirements and bands. In particular, the FWA/MBB & indoor splits are yet more evidence that the need for #6GHz for #5G has been hugely overstated. In particular, because FWA is "deterministic" (ie it doesn't move around or cluster in crowds) it's much more tolerant of using different bands - or unlicensed spectrum. Meanwhile indoor MBB can be delivered with low-band macro 5G, dedicated in-building systems (perhaps mmWave), or offloaded to WiFi. Using midband 5G and MIMO to "blast through walls" is not ideal use of either spectrum or energy.

- View 5G traffic data/forecasts used in so-called #fairshare or #costrecovery debates with skepticism. Check if discretionary FWA is inflating the figures. Question any GDP impact claims. Consider how much RAN investment is actually serving indoor users, maybe inefficiently. And be aware that home FWA traffic skews towards TVs and VoD #streaming (Netflix, Prime etc) rather than smartphone- or upload-centric social #video like TikTok & FB/IG.

Telecoms regulation needs good input data, not convenient or dramatic headline stats.