Published via my LinkedIn Newsletter - see here to subscribe / see comment thread

"Telecoms" or "telecommunications" is based on the Greek prefix "tele-".

It means "at a distance, or far-off". It is familiar from its use in other terms such as telegraph, television or teleport. And for telecoms, that makes sense - we generally make phone calls to people across medium or long distances, or send them messages. Even our broadband connections tend to link to distant datacentres. The WWW is, by definition, worldwide.

The word "communications" comes from a Latin root meaning to impart or share. At the time, that would mostly have been done by talking to other people directly, but could also have involved writing or other distance-independent methods.

This means that distant #communications, #telecoms, has some interesting properties:

- The 2+ distant ends are often (but not always) on different #networks. Interconnection is therefore often essential.

- Connecting distant points tends to mean there's a good chunk of infrastructure in between them, owned by someone other than the users. That infrastructure has to be paid for, somehow.

- Because the communications path is distant, it usually makes sense for the control points (switches and so on) to be distant as well. And because there's typically payment involved, the billing and other business functions also need to be sited "somewhere", probably in a #datacentre, which is also distant.

- There is a whole host of opportunities and risks with distant communications, which means that governments take a keen interest. There are often licences, regulations and internal public-sector uses - notably emergency services.

- The infrastructure usually crosses the "public domain" - streets, airwaves, rooftops, dedicated tower sites and so on. That brings additional stakeholders and rule-makers into the system.

- Involving third parties tends to suggest some sort of "service" model of delivery, or perhaps government subsidy / provision.

- Competition authorities need to take into account huge investments and limited capacity/scope for multiple networks. That also tends to reduce the number of suppliers to the market.

That is telecommunications - distant communications.

But now consider the opposite - nearby communications.

Examples could include a private 5G network in a factory, a LAN in an office, a WiFi connection in the home, a USB cable, or a Bluetooth headset with a phone. There are plenty of other examples, especially for IoT.

These nearby examples have very different characteristics to telecoms:

- Endpoints are likely to be on the same network, without interconnection.

- There's usually nobody else's infrastructure involved, except perhaps a building owner's ducts and cabinets.

- Any control points will generally be close - or perhaps not needed at all, as the devices work peer-to-peer.

- There's relatively little involvement of the "public domain", unless there are risks like radio interference beyond the network boundaries.

- It's not practical for governments to intervene too much in local communications - especially when they occur on private property, or inside a building or machine.

- There might be a service provider, but equally the whole system could be owned outright by the user, or embedded into another larger system like a robot or vehicle.

- Competition is less of an issue, as is supplier diversity. You can buy 10 USB cables from different suppliers if you want.

- Low-power, shared or unlicensed spectrum is typical for local #wireless networks.

I've been trying to work out a good word for this. Although "#telecommunications" is itself an awkward Greek / Latin hybrid, I think the best prefix might be Greek again - "peri", which means "around", "close" or "surrounding" - think of perimeter, peripheral, or the perigee of an orbit.

So I'm coining the term pericommunications, to mean nearby or local connectivity. (If you want to stick to all-Latin, then proxicommunications would work quite well too).

Just because a company is involved in telecoms does not mean it can necessarily expect a role in pericoms as well (or indeed, vice versa). It can certainly participate in that market, but there may be fewer synergies than you might imagine.

Some telcos are also established and successful pericos. Many home broadband providers have done an excellent job of providing whole-home #WiFi systems with mesh technology, for example. In-building mobile coverage systems in large venues are often led by one telco, with others onboarding as secondary operators.

But other nearby domains are trickier for telcos to address. You don't expect to get your earbuds as an accessory from your mobile operator - or indeed, pay extra for them. Attempts to add on wearables as an extra SIM on a smartphone account have had limited success.

And the idea of running on-premise enterprise private networks as a "slice" of the main 4G/5G macro RAN has clearly failed to gain traction, for a variety of reasons. The more successful operators are addressing private wireless in much the same way as other integrators and specialist SPs, although they can lean on their internal spectrum team, test engineers and other groups to help.

Some are now "going the extra mile" (sorry for the pun) for pericoms. Vodafone has just announced its prototype 5G mini base-station, which is the size of a Wi-Fi access point and is based on a Raspberry Pi and a Lime Microsystems radio chip. It can support a small #5G standalone core and is even #OpenRAN compliant. Other operators have selected new vendors or partners for campus 4G/5G deployments. The four UK MNOs have defined a set of shared in-building design guidelines for neutral-host networks.

It can be hard for regulators and policymakers to grasp the differences, however. The same is true for consultants and lobbyists. An awful lot of the suggested upsides of 5G (or other forms of connectivity) have been driven by a tele-mindset rather than a peri-view.

I could make a very strong argument that countries should really have a separate pericoms regulator, or a dedicated unit within the telecoms regulator and ministry. The stakeholders, national interests and economics are completely different.

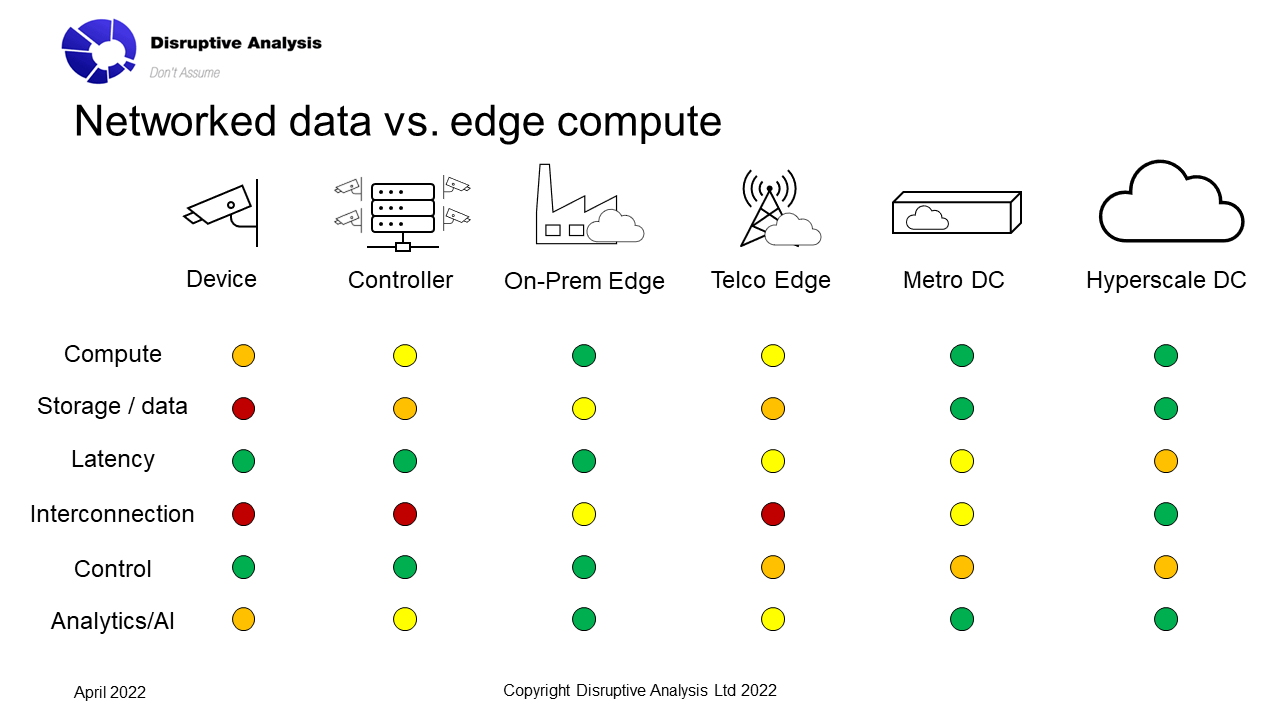

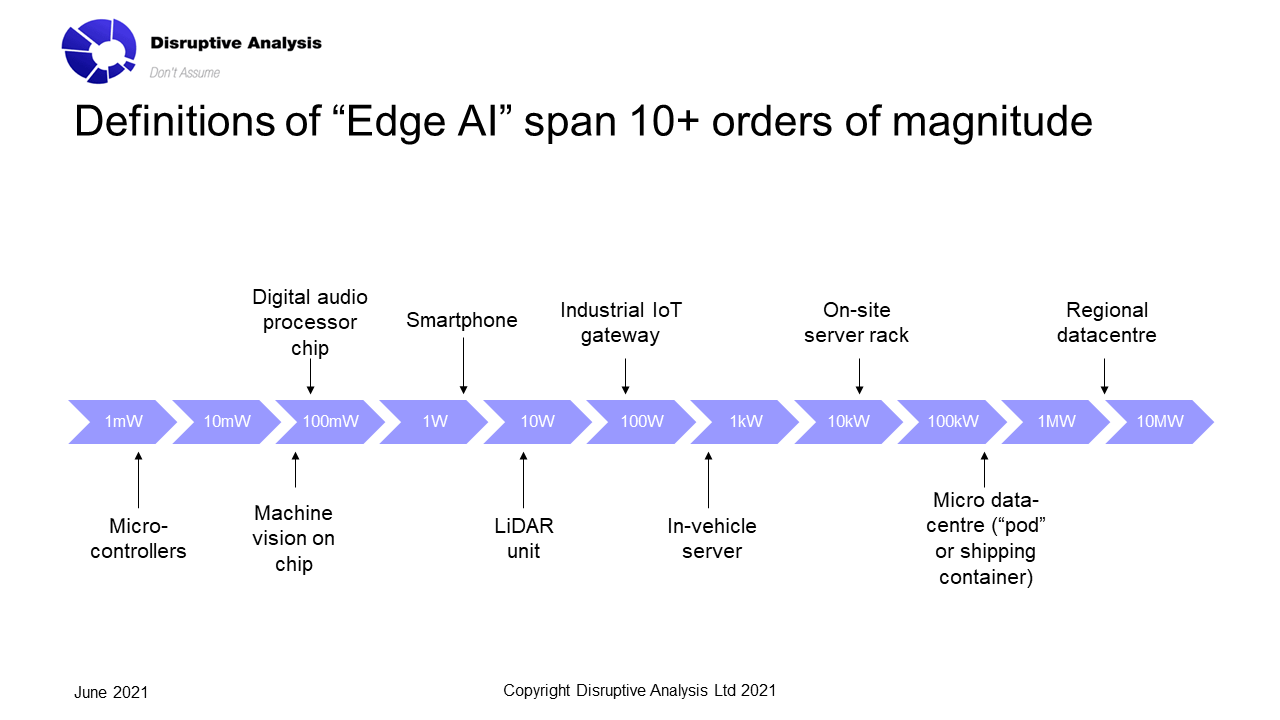

A similar set of differences can be seen in #edgecomputing: regional datacentres and telco MEC are still "tele". On-premise servers or on-device CPUs and GPUs are peri-computing, with very different requirements and economics. Trying to blur the boundary doesn't work well at present - most people don't even recognise it exists.

Overall, we need to stop assuming that #pericoms is merely a subset of #telecoms. It isn't - it's almost completely different, even if it uses some of the same underlying components and protocols.

(If this viewpoint is novel or interesting and you would like to explore it further and understand what it means for your organisation - or get a presentation or keynote about it at an event - please get in touch with me.)